Research

Texas Robotics provides world-class education and pursues innovative research emphasizing long-term autonomy and human-robot interaction, while leveraging the breadth of UT Austin to support a wide range of industrial applications.

Texas Robotics Laboratories

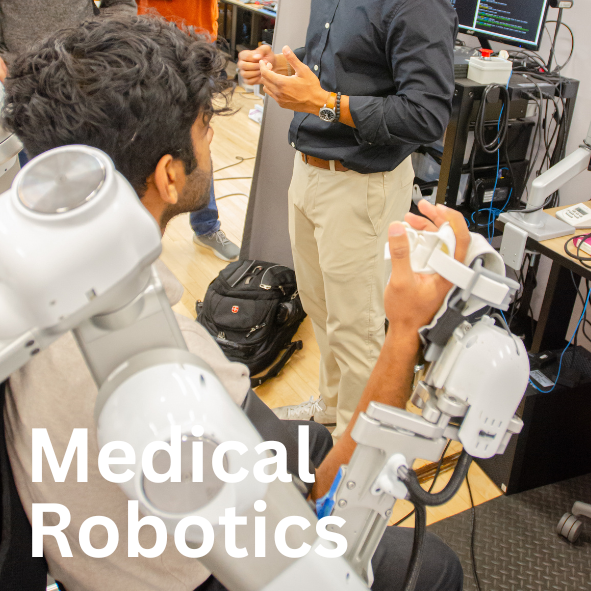

The ARTS lab develops high-dexterity, situationally aware continuum manipulators, soft robots, and instruments specially designed to make a variety of surgical interventions less invasive.

The SAHM Lab develops and applies mechanical and biomedical engineering techniques to positively influence the neuromechanics of able-bodied individuals and individuals with disabilities. We pursue this goal through the informed design and control of wearable assistive technologies.

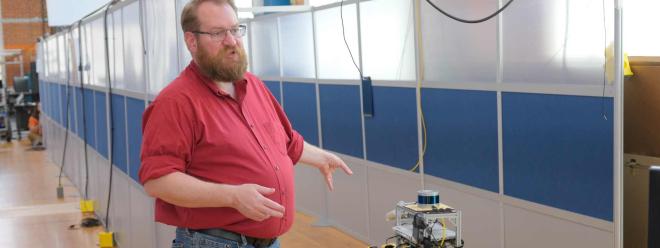

The AMRL performs research in robotics to continually make robots more autonomous, accurate, robust, and efficient in real-world unstructured environments.

The Center for Autonomy's research focuses on the theoretical and algorithmic aspects of the design and verification of autonomous systems. It embraces the fact that autonomy does not fit within traditional disciplinary boundaries and has made numerous contributions at the intersection of formal methods, controls, and learning.

The focus of our research is on the direct use of human brain signals for human-robot interaction and for control of neuroprostheses. The overarching objective of our research is to bring brain-machine interfaces (BMI) out of the laboratory to augment human capabilities, to help people recover from insults to the central nervous system, and to facilitate users’ acquisition of BMI skills.

The CLeAR lab focuses on the intersection between control theory, machine learning, and game theory to design high-performance, interactive autonomous robots.

The Human Centered Robotics lab designs humanoid robots and researches bipedal locomotion.

The long-term goal of the HeRo Lab is to develop robotic systems that are genuinely collaborative partners with human operators, focusing on technology for surgical intervention and medical training.

The Learning Agents Research Group's research pertains to machine learning (especially reinforcement learning) and multiagent systems.

LWR researches artificial intelligence and human-robot interaction. Central to this work at UT is the development of comprehensive systems and enabling technologies for general-purpose service robots.

The MERGe lab aims to design robot materials tailored to their intended tasks, studying how a material’s geometry and structure affect a robot’s end performance. By carefully designing these materials, the lab has been able to create unique and effective robot bodies that can be further improved through advanced computer modeling and optimization.

The Nuclear and Applied Robotics Group develops and deploys advanced robotics in hazardous environments in order to minimize risk for the human operator.

The lab focuses on the development of robotic devices, based on biomechanical analyses, to assist in rehabilitation, to improve prosthesis design, and to provide fitness opportunities for people with severe disabilities.

This lab explores the mechanisms that enable intelligence in embodied agents. Inspired by biological intelligence, we develop robotic algorithms that improve robot autonomy in perception, control, knowledge representation, and decision making through learning. Our goal is to create robotic helpers that enhance everyday human life.

The RPL lab aims to build general-purpose robot autonomy in the wild. We develop intelligent algorithms for robots and embodied agents to reason about and interact with the real world.

The Robotics, Sensing and Networks Laboratory was established in 2005 with the goal of developing robotic systems that can operate in dynamic and complex environments. Such systems often involve multiple robots and perform tasks involving sensing and communication. We call them Robotic Sensor Networks.

The Swarm Lab works on collaborative perception, learning, and control between fleets of networked robots. We also work on robust computer vision and model predictive control for drones. Our research spans optimal control, energy-efficient deep learning, 5G wireless networking, and computer vision.